Cochrane has launched an innovative study to test whether artificial intelligence (AI) tools can support or enhance evidence synthesis. We were delighted to receive 48 proposals from AI tool developers in response to our open call, but we only have scope to evaluate two, so a selection process was required.

Here, we outline that process and demonstrate what Cochrane values when deciding whether to use an AI tool for evidence synthesis. We encourage AI tool developers and users to consider these factors to inform their choices about how to responsibly and effectively use AI in evidence synthesis.

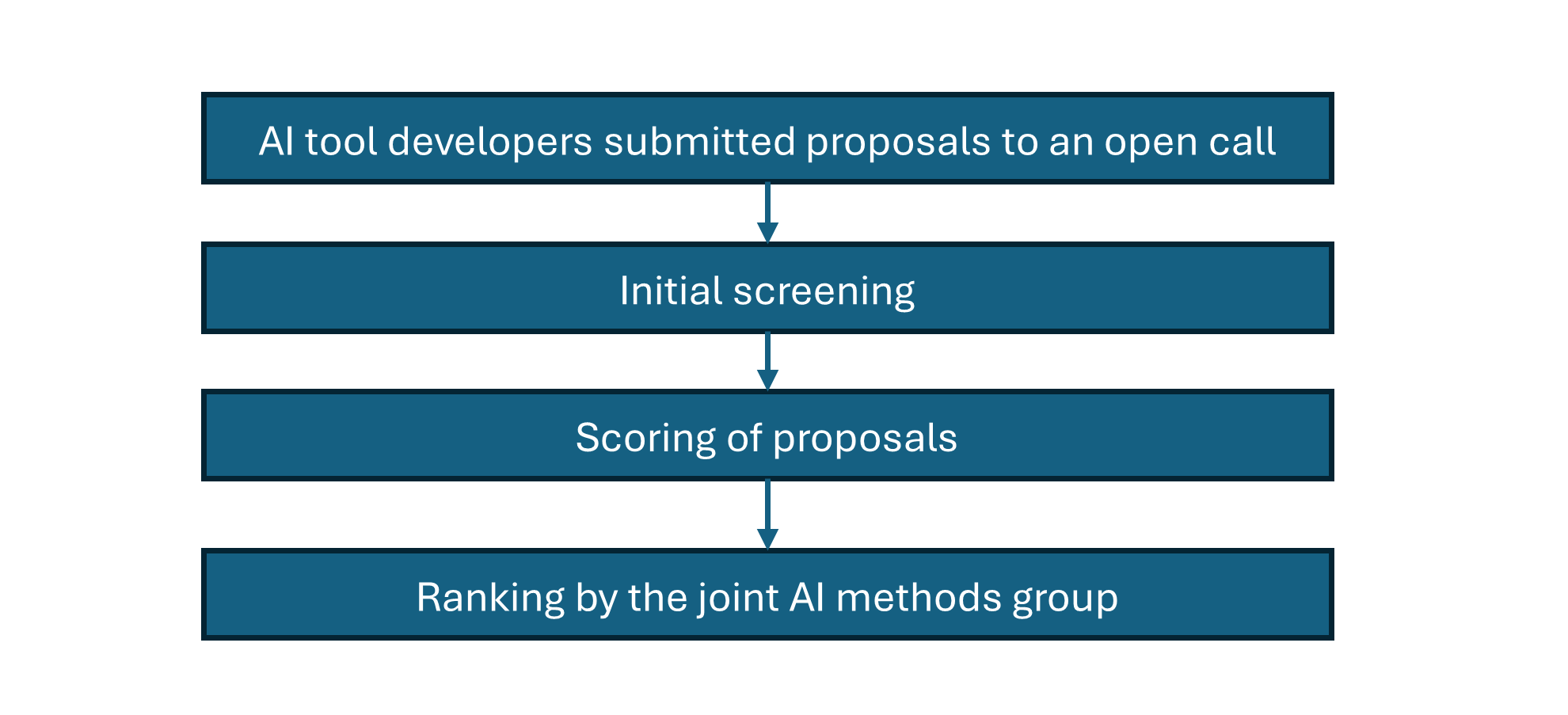

Selection process

It is important to note that proposals were assessed during the selection process based on the information provided by the AI tool developers, rather than on real-world use of the tools. Real-world use will be assessed in Cochrane’s platform study, so we only expect to draw meaningful conclusions about the usability and suitability of the tools once they have been evaluated.

Initial screening

Submissions were screened and scored by staff in the Cochrane Central Executive Team based on:

- Cochrane workflow coverage: We focused on AI tools that applied to screening (based on abstracts and full texts) and data extraction, as research has indicated that AI tools, particularly those based on large language models (LLMs), are most promising for these stages of the review process.

- Maturity: We decided to focus on tools with the potential to benefit evidence synthesists immediately, so we screened out any tools that were not already available for use or had not already undergone some form of evaluation.

- Affordability: We excluded any tools that would not be affordable to scale up after the study.

Scoring

Submissions that passed initial screening were scored against the following criteria (more details are provided in the original call for AI tool proposals):

- Alignment with the Responsible use of AI in evidence SynthEsis (RAISE) recommendations (assessed using the responsible handover framework in RAISE 3)

- Evaluation standards and validation approaches

- Tool usability and alignment with Cochrane expectations, including methodological standards and review workflows

- AI tool developer commitment to capacity-building, support and an interoperable infrastructure

The first two criteria held double the weight to reflect their importance.
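As a rough illustration of how double weighting changes a total, the Python sketch below combines four criterion scores with weights of 2, 2, 1 and 1. The criterion keys, the 0 to 5 scale, the tool names and the example scores are all hypothetical and are not taken from the selection process itself; only the relative weighting comes from the description above.

```python
# Hypothetical criterion keys. The weights mirror the description above:
# the first two criteria count double, the remaining two count once.
WEIGHTS = {
    "raise_alignment": 2,
    "evaluation_standards": 2,
    "usability": 1,
    "developer_commitment": 1,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Return the weighted total for one submission."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def shortlist(submissions: dict[str, dict[str, float]], top_n: int) -> list[str]:
    """Return the names of the top_n submissions by weighted total."""
    ranked = sorted(
        submissions,
        key=lambda tool: weighted_score(submissions[tool]),
        reverse=True,
    )
    return ranked[:top_n]


# Invented example scores on an assumed 0 to 5 scale.
example = {
    "tool_a": {"raise_alignment": 3, "evaluation_standards": 3,
               "usability": 5, "developer_commitment": 5},
    "tool_b": {"raise_alignment": 5, "evaluation_standards": 5,
               "usability": 2, "developer_commitment": 2},
}
print(shortlist(example, top_n=1))
```

In this invented example, tool_a has the higher unweighted total (16 against 14), but tool_b comes out ahead once the first two criteria are doubled (24 against 22), which is the effect the weighting is intended to have.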

Final ranking

Of the 18 submissions that met the initial screening criteria, the nine submissions with the highest scores were presented to seven volunteers from the joint AI Methods Group, who make up the scientific advisory group for the platform study. Two tools were removed during discussion due to a lack of publicly available evaluation studies.

Members of the advisory group independently ranked the remaining seven tools and the top two tools were selected for the platform study. The other AI tools in the ranked list act as a queue of tools that can be added to the platform study if the initial candidates fail to meet the expected standards. The thresholds for these standards are outlined in the study protocol, which will be shared soon.
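For readers who want to see how independent rankings can be combined into a selection and a queue, here is a minimal Python sketch. How the advisory group's rankings were actually combined is not described here, so the mean-rank rule, the alphabetical tie-break, the function names and the tool labels below are all assumptions made for illustration only.

```python
from statistics import mean


def aggregate_rankings(rankings: list[list[str]]) -> list[str]:
    """Order tools by mean position across reviewers (position 1 is best).

    Assumption: a simple mean-rank rule with alphabetical tie-breaking.
    """
    if not rankings:
        raise ValueError("at least one ranking is required")
    tools = set(rankings[0])
    for ranking in rankings:
        if len(ranking) != len(tools) or set(ranking) != tools:
            raise ValueError("each reviewer must rank every tool exactly once")
    mean_rank = {
        tool: mean(ranking.index(tool) + 1 for ranking in rankings)
        for tool in tools
    }
    # Sort by mean rank; ties are broken by name so the order is deterministic.
    return sorted(tools, key=lambda tool: (mean_rank[tool], tool))


def select_and_queue(rankings: list[list[str]], n_selected: int = 2):
    """Split the aggregated order into selected tools and a reserve queue."""
    ordered = aggregate_rankings(rankings)
    return ordered[:n_selected], ordered[n_selected:]


# Three hypothetical reviewers ranking three hypothetical tools.
reviewer_rankings = [
    ["A", "B", "C"],
    ["B", "A", "C"],
    ["A", "C", "B"],
]
selected, queue = select_and_queue(reviewer_rankings, n_selected=2)
print(selected, queue)
```

Other aggregation rules, such as median rank or a Borda count, could give a different order for the same inputs, so the choice of rule would need to be stated alongside any result.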

Factors that informed the selection of tools

As proposals progressed through the process, general themes around their suitability emerged. We encourage AI tool developers to focus on these factors to increase their potential for use across the evidence synthesis community.

Coverage of systematic review workflows

Preference was given to tools that supported both study screening and data extraction, with a desire to avoid Cochrane authors needing to become familiar with multiple new tools. However, each application of AI in the tool had to be assessed and validated on its own merit, and so it’s important that the AI tool developers think holistically about all the AI systems embedded in the tool, how users will interact with them, and whether they meet the expected standards. We also focused on AI tools that were committed to applying the standards of rigor and integrity expected of Cochrane reviews.

“Ensure the development team appreciates the principles that underpin reliable evidence synthesis and design AI that supports these principles and does not undermine them.” – RAISE 1, AI tool developer recommendation 3.1

“Each assessment is specific to function and context; for platforms or tools with multiple embedded AI systems, each AI system requires a separate assessment. (For example, a systematic review platform might contain several AI systems for different tasks such as screening and data extraction.)” – RAISE 3, p.11

Open-source vs proprietary models

We had hoped to prioritize open-source tools developed by the academic community over commercial offerings, because:

- They impose less financial cost to the Cochrane community

- They better facilitate transparent and reproducible methodology, especially if they do not rely upon LLMs from third-party providers

- Cochrane can freely adapt them to our workflows and methodologies

However, we received fewer proposals for mature open-source tools than we had anticipated, and this consideration had to be weighed alongside the other factors that informed our decision. Only one open-source tool progressed to the list of seven that were ranked by the joint AI Methods Group, and it was not selected as an initial candidate because it does not support data extraction.

As open science practices align with Cochrane’s ethos, we are keen to hear from more developers of open-source tools, and encourage all tool developers to align with the practices outlined in RAISE, where possible.

Independent of software licensing models, as part of finalizing the decision on which tools to use, we requested full disclosure of funding and declarations of interest, and considered whether any amounted to a conflict that would prevent Cochrane from piloting the tool.

“... Transparency can be maximised if the source code and other materials used to develop and run the models are also openly available. These should ideally adhere to FAIR (Findability, Accessibility, Interoperability, and Reuse) principles for data and FAIR4RS principles for research software. Additionally, confirm how it can be accessed, along with any restrictions on its use or licensing considerations.” – RAISE 1, AI tool developer recommendation 3.6

“Be transparent about your funding sources and declare any financial and non-financial interests.” – RAISE 1, AI tool developer recommendation 3.14

Transparency of existing evidence base and substantiated claims

We only considered tools with publicly accessible validation studies. Any without, including those that required non-disclosure agreements before they could be shared, were not shortlisted.

We focused on AI tools with validation studies that were methodologically rigorous and transparently reported, to address concerns about replicability. We assessed performance claims against the methods used, and whether those claims were potentially unsubstantiated because of missing details or methodological limitations. We also considered whether any assumptions (often unstated) in validation studies indicated whether performance within specific topic domains would generalize across Cochrane’s scope of health and social care.

“Conduct training, testing, and validation evaluations using appropriate methods, including key performance metrics, adhering to protocols, interpreting data judiciously, and drawing reasonable conclusions based on the findings” – RAISE 1, AI tool developer recommendation 3.4

“Ensure complete, transparent and public reporting of training, testing, and validation evaluations, including training sets, detailed methods and the publication of null results, preferably published in peer-reviewed journals” – RAISE 1, AI tool developer recommendation 3.5

“Report how the system works; do not hide reasonable openness behind ‘commercial confidentiality’ where this is unnecessary.” – RAISE 1, AI tool developer recommendation 3.9

“Do not market or otherwise recommend a tool for use in evidence syntheses if you cannot demonstrate robust evaluation(s) that support the recommendation.” – RAISE 1, AI tool developer recommendation 3.16

“Respect the accuracy standards expected by the evidence synthesis community. Avoid promoting systems that undermine these principles. For instance, do not develop, promote, or deploy systems that may amplify publication bias or generate biased and misleading summaries.” – RAISE 1, AI tool developer recommendation 3.19

Human oversight

While the effectiveness of the exact human oversight mechanisms of specific tools will only be evaluated later in the platform study, at this stage we prioritized proposals that stated a commitment to human-centred design to ensure users are aware of all automated decisions and can override these manually. Tools with agentic workflows that execute multiple tasks autonomously without any human oversight were excluded.

“Facilitate the use and development of human-centred AI systems that emphasise human oversight. In this context, ‘human-centred’ means that humans should maintain control over the AI system while having agency and oversight of its operation.” – RAISE 1, AI tool developer recommendation 3.20

Dependency on user-defined prompts

The performance of AI tools powered by LLMs often depends on how the models are prompted. In the workflow of some tools, the task of engineering effective prompts falls on the user, with no guiding user interface. We do not expect Cochrane authors to be experienced in prompt engineering, which raised concerns about whether the performance claims for these tools would apply to real-world use, where authors must write prompts specific to their own review. We deprioritized tools where we had concerns that Cochrane author teams would not have the technical skills to use them reliably with the training, documentation and support offered.

“Provide clear guidance to users and assist them in being accountable for their use of your AI system. Comprehensive user guidance enhances transparency, facilitates accountability, promotes appropriate and ethical use, and ultimately helps evidence synthesists make informed decisions about their usage.” – RAISE 1, AI tool developer recommendation 3.11

“Writing prompts for a language model is building an AI tool. ... when you are designing a set of prompts (for example, to carry out screening or risk of bias assessment), you are designing a machine learning model (manually) for your particular task.” – RAISE 2, Box 1, p.5

Compliance with legal and ethical requirements

AI tool developers needed clear and public terms of use. Tool developers who did not demonstrate compliance with legal and ethical requirements were excluded. Examples included:

- Tools that use uploaded content to train the system, or that impose limitations on the use of their outputs

- Features that streamline the import of full-text articles into a tool, where we weren’t confident that users would be assisted to avoid potential copyright infringements

“Awareness and adherence to national and international guidelines, such as the EU AI Act, especially regarding the technical information about models you have built or fine-tuned.” – RAISE 1, AI tool developer recommendation 3.7

“Do not require users of your AI system to relinquish rights over or share their data for future system development and evaluation. Ensure your expectations align with existing and emerging standards for regulation and use.” – RAISE 1, AI tool developer recommendation 3.27

“Ensure ethical, legal, and regulatory standards are followed during AI model development, such as avoiding copyright infringement or unlawful use, and clearly outline any ethical or legal considerations that users of your AI system should be aware of.” – RAISE 1, AI tool developer recommendation 3.28

Next steps

The selection process outlined here is part of Cochrane’s 2026 platform study of AI tools. All decisions about inclusion in the study were made on the information and evidence provided by the AI tool developers. Further learnings on the usability and suitability of the tools will come from the later stages of the study, which will be shared when available.

The selection process was necessitated and shaped by the finite scope and short timelines of the study. For a more general overview we refer AI tool developers to the Responsible Use of AI in Evidence Synthesis recommendations and guidance as well as Cochrane’s resources on evidence synthesis methodology.

This is a first step, as we pilot new approaches for producing more timely evidence synthesis in a way that does not compromise on integrity and rigor. We are grateful for all the efforts of AI tool developers who submitted proposals and look forward to collaborating further with those who are committed to responsible development and adoption of AI tools. Our aim is to ensure that the Cochrane community can produce more accessible, trusted evidence and advocate for its use to deliver better, more equitable health for all.

Part of this work was supported by Wellcome Trust grant number 323143/Z/24/Z.

Find out more

- Cochrane launches innovative study to assess AI tools for evidence synthesis

- Cochrane announces selected AI tools for innovative platform study

- What makes Cochrane’s new AI study innovative?

Are you an AI tool developer interested in using Cochrane data?

AI developers are subject to the same terms and conditions for re-use as human users. As such, AI developers and companies must obtain authorization before using data from Cochrane reviews for AI development, training, or implementation, and all terms of the licence associated with each review apply; no implied permission exists without a licence. Any uses of Cochrane data must adhere to Cochrane’s data re-use terms and conditions, including appropriate citation and accreditation.

For more information, see Wiley’s Statement on Illegal Scraping of AI Copyright.